Since 2013, I have also been involved in a study that investigates whether there is a distinctive relationship between users’ mood, their perception of colors, and how these influence the perception of music, especially in terms of perceived and induced emotions. We published several conference papers, including one at ISMIR 2014. During the study, we gathered a substantial amount of data: 741 users provided 7000 responses on a set of 200 songs. The Moodo dataset is freely available (here) and includes users’ current mood, their perception of emotion labels in terms of valence and arousal (the VA space is based on Russell’s circumplex model of affect), and their responses to each music excerpt: the best-fitting color, the perceived and induced emotions, and a valence-arousal point for each emotion present.
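To make the structure of a single response more concrete, here is a minimal Python sketch of how one might represent it and average the valence-arousal points for one emotion. It is only an illustration: the class names, field names, the RGB color encoding and the [-1, 1] value range are my assumptions for this example and do not describe the actual file format of the Moodo dataset.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Optional, Tuple


@dataclass
class EmotionRating:
    """One emotion marked for an excerpt (hypothetical layout)."""
    label: str      # emotion label, e.g. "joy" (illustrative value)
    valence: float  # assumed to lie in [-1, 1]
    arousal: float  # assumed to lie in [-1, 1]
    kind: str       # "perceived" or "induced"


@dataclass
class SongResponse:
    """One user's response to one music excerpt (hypothetical layout)."""
    user_id: int
    song_id: int
    color: Tuple[int, int, int]  # best-fitting color, assumed to be RGB
    ratings: List[EmotionRating] = field(default_factory=list)


def mean_va(responses: List[SongResponse], label: str,
            kind: str) -> Optional[Tuple[float, float]]:
    """Average the valence-arousal points of one emotion label and kind."""
    points = [
        (rating.valence, rating.arousal)
        for response in responses
        for rating in response.ratings
        if rating.label == label and rating.kind == kind
    ]
    if not points:
        return None  # nobody marked this emotion
    return (mean(v for v, _ in points), mean(a for _, a in points))
```

With a list of such responses, `mean_va(responses, "joy", "perceived")` would give the average position of perceived joy in the VA space, or `None` if no user selected it.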

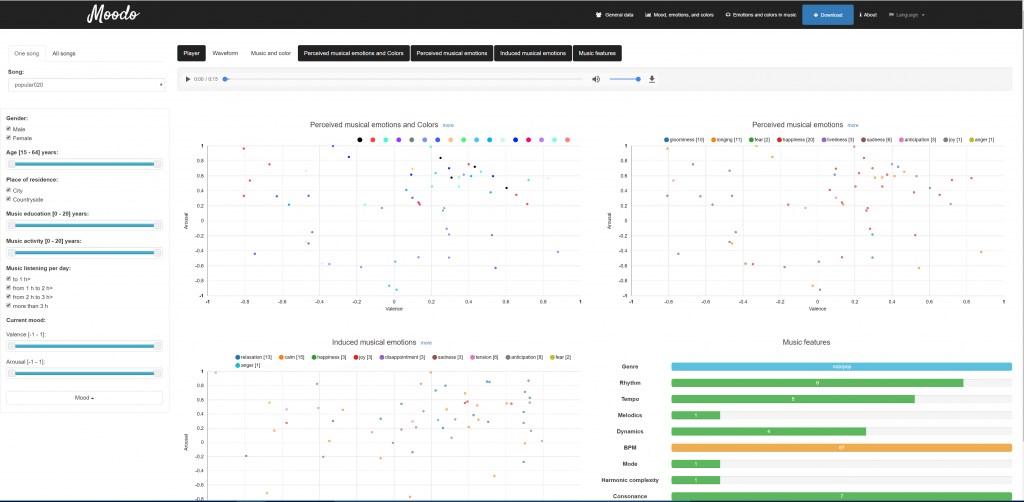

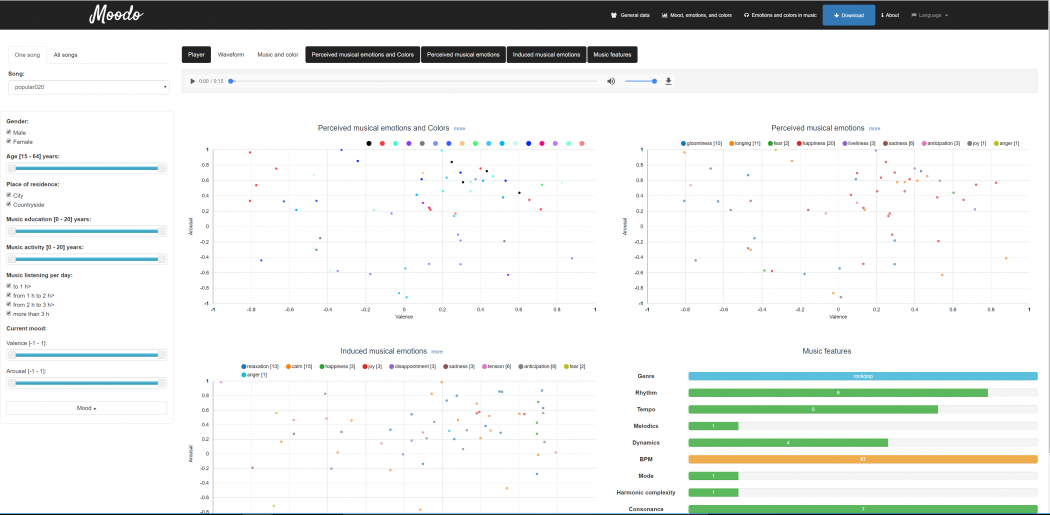

We recently published a visualization of the Moodo dataset, available at http://moodo.musiclab.si/ (it works best in the Chrome browser). The visualization is dynamic: users can filter out specific data, limit the parameters of the gathered responses, and so on.

Moodo Visualization – mood perception in music context (a single song)

In 2014, we also focused on context-dependent user experience. We recently published a book chapter titled “Towards a Personalised and Context-Dependent User Experience in Multimedia and Information Systems”, with an overview of the initial study and a small experiment that tries to determine which features can be used for mood estimation.

The book chapter is available here: http://link.springer.com/chapter/10.1007/978-3-319-49407-4_8.

Based on that study, we continued to analyze the Moodo dataset in terms of user-aware music information retrieval. The advanced analysis covers the emotional mediation of color and music, the genre specificity of musically perceived emotions, and the influence of additional user context: mood, gender and musical education. We gathered the results in a second book chapter, published here: http://link.springer.com/chapter/10.1007%2F978-3-319-31413-6_16.

Feel free to read the chapters. If you want to discuss our analyses or get an update on our mood-related research, please contact me.

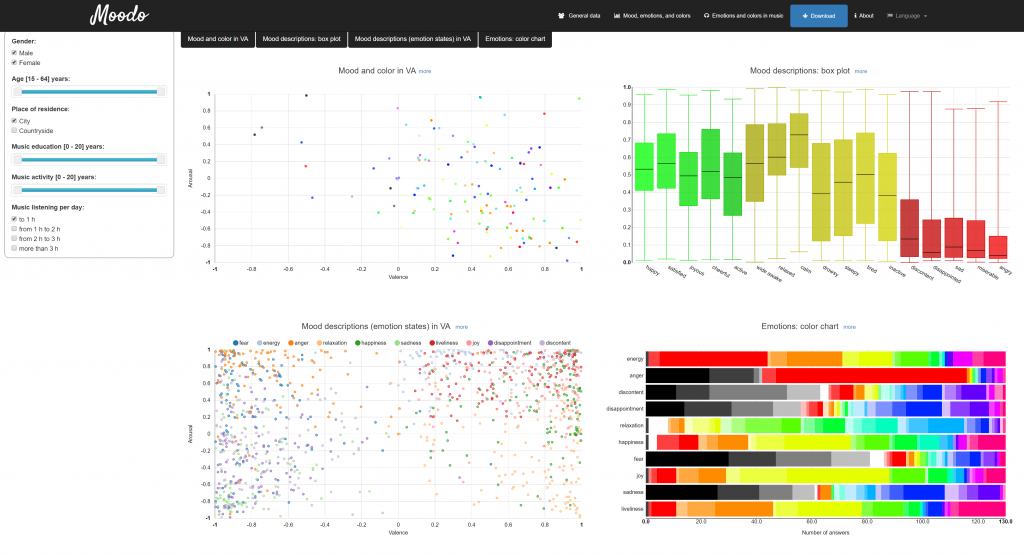

Moodo visualization – user perception of emotions without music context

Both book chapter abstracts are also available below:

Towards a Personalised and Context-Dependent User Experience in Multimedia and Information Systems

Advances in multimedia and information systems have shifted the focus from general content repositories towards personalized systems. Much effort has been put into modeling and integration of affective states with the purpose of improving overall user experience and functionality of the system. In this chapter, we present a multi-modal dataset of users’ emotional and visual (color) responses to music, with accompanying personal and demographic profiles, which may serve as the knowledge basis for such improvement. Results show that emotional mediation of users’ perceptive states can significantly improve user experience in terms of context-dependent personalization in multimedia and information systems.

The book chapter is available here: http://link.springer.com/chapter/10.1007/978-3-319-49407-4_8.

Towards User-Aware Music Information Retrieval: Emotional and Color Perception of Music

This chapter presents our findings on emotional and color perception of music. It emphasizes the importance of user-aware music information retrieval (MIR) and the advantages that research on emotional processing and interaction between multiple modalities brings to the understanding of music and its users. Analyses of results show that correlations between emotions, colors and music are largely determined by context. There are differences between emotion-color associations and valence-arousal ratings in non-music and music contexts, with the effects of genre preferences evident for the latter. Participants were able to differentiate between perceived and induced musical emotions. Results also show how associations between individual musical emotions affect their valence-arousal ratings. We believe these findings contribute to the development of user-aware MIR systems and open further possibilities for innovative applications in MIR and affective computing in general.

The book chapter is available here: http://link.springer.com/chapter/10.1007%2F978-3-319-31413-6_16.
